- Type: Bug
- Resolution: Incomplete
- Priority: Major
- Component/s: durable-task-plugin
- Environment: jenkins: v2.190.2; durable-task: v1.33

As mentioned in this bug I filed a few days ago:

https://issues.jenkins-ci.org/browse/JENKINS-59838

I have updated Durable Task Plugin to v1.33 but I am still facing the same issue.

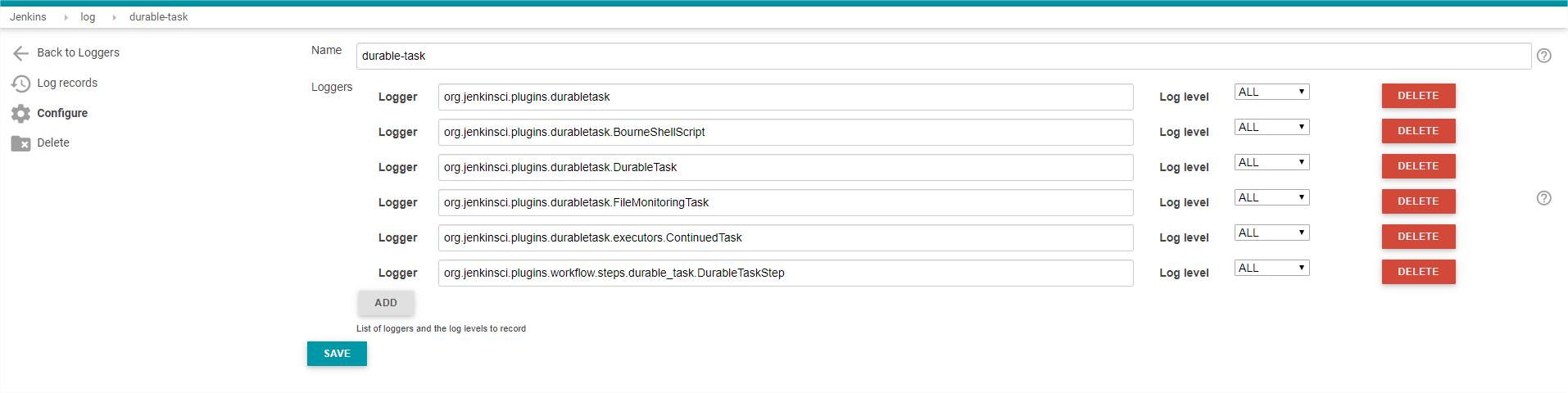

I captured the logs under "System Log":

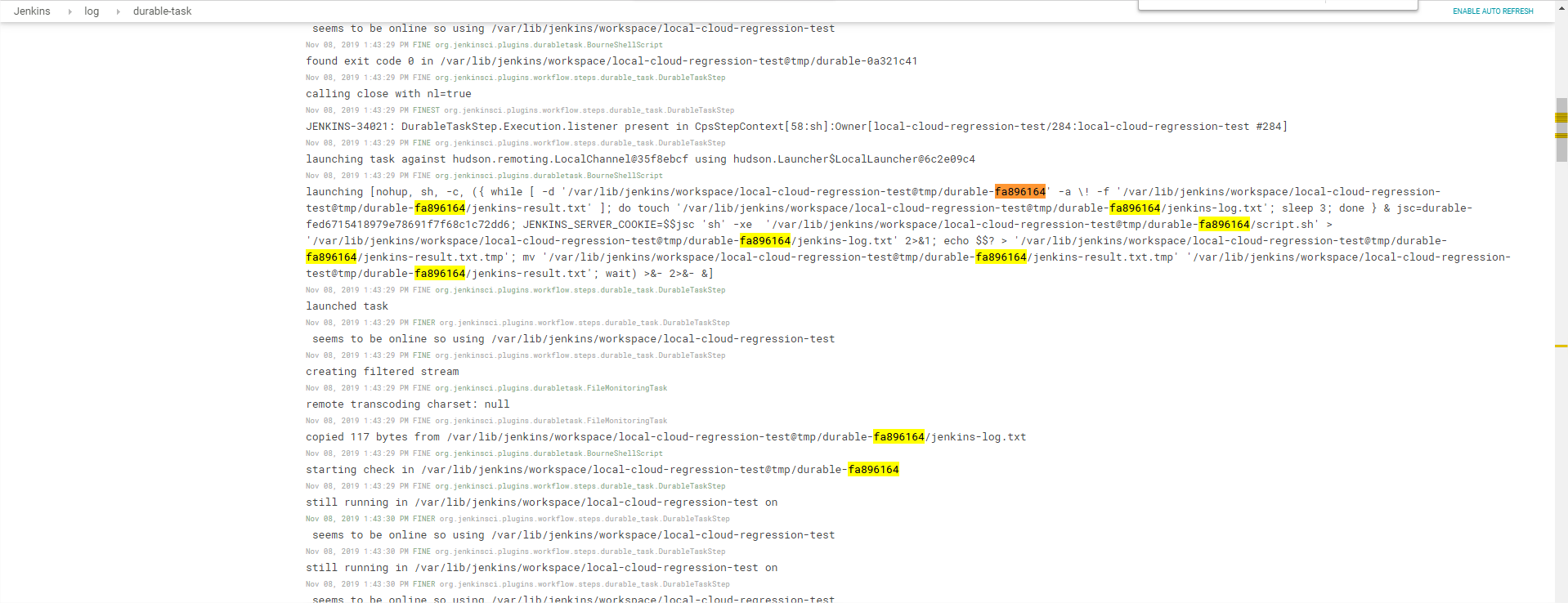

The output I get from that log does not mean much to me:

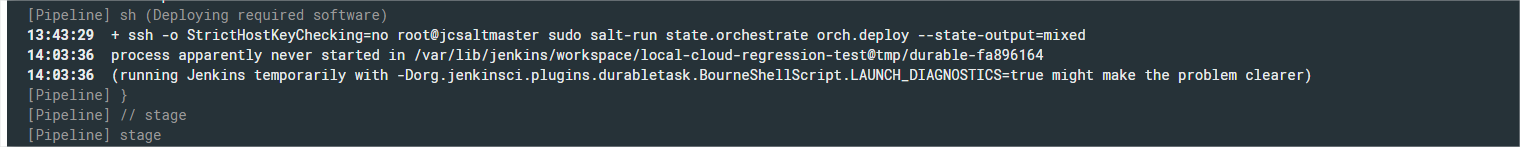

Here is the error that appeared in the job console:

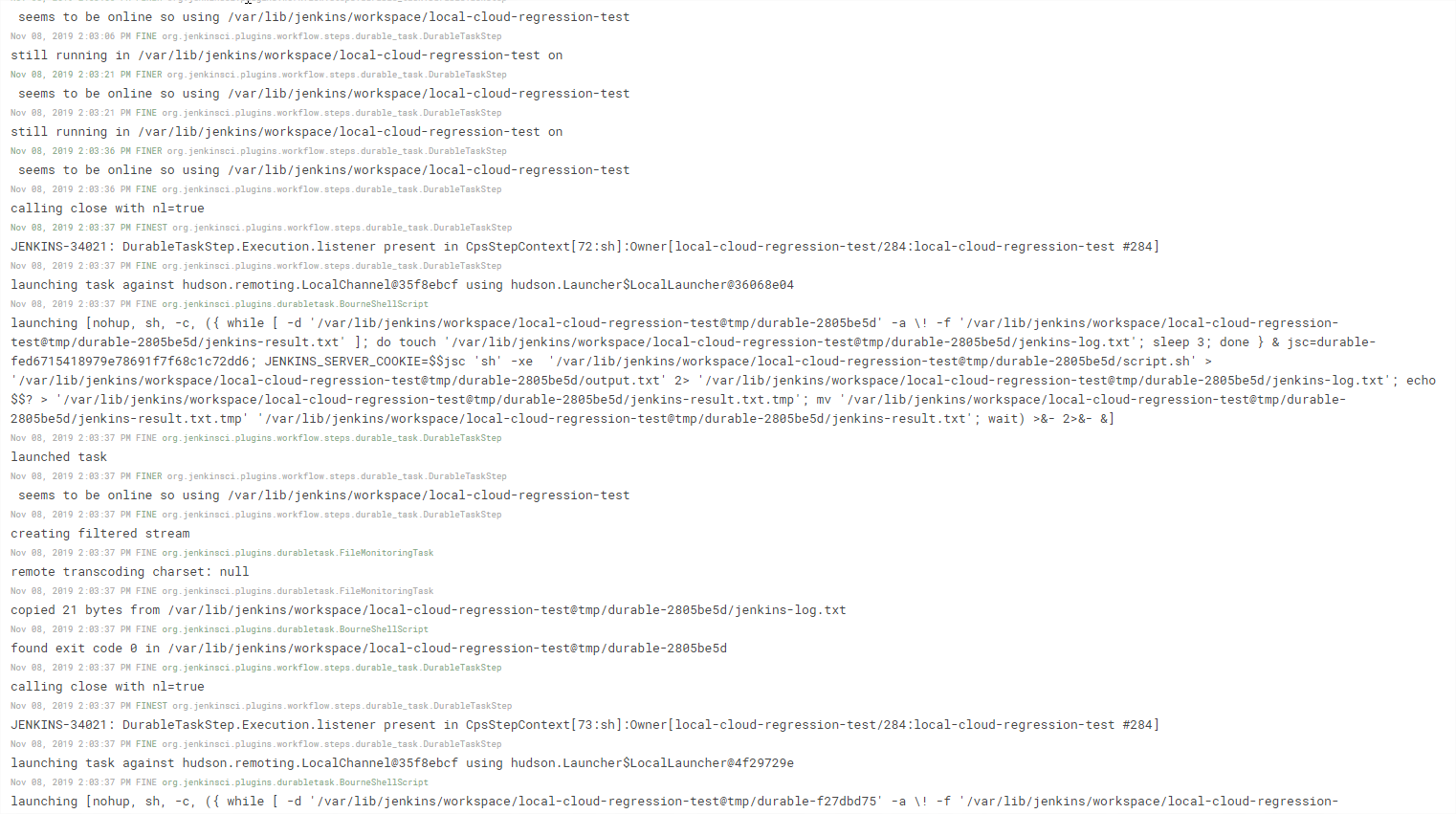

And the "system log" changed to:

This is a major headache because I get that error every time.